Why you should not „hire AI”

AI emerged within just a few years and became a hot topic. The many new terms that have appeared prove we are facing something new. Language is a mirror that reflects reality: new terms mean that reality requires new abstractions to deal with it.

Terms such as „vibe coding” and „agentic coding” indicate that software development (coding) happens with AI, but they do not reflect the entire shift. And that shift is not happening yet; rather, something is stopping us.

Why can’t we hire AI?

Consider this part an imprecise, human-friendly model rather than a technical description.

The problem is that every time we refer to AI, we actually mean LLMs (Large Language Models) that follow the GPT (Generative Pre-trained Transformer) architecture. This is something of a curse, because the main approach can be simplified and captured this way: „make it similar to X”.

At the same time, these LLMs need to make many decisions during the process. When there are several similar results, they can’t be taken as is. The model must either end up with one option or compute something in between (an average of multiple options). Does this option exist? Nobody knows. It’s „calculated”, but it may never have been a part of this reality. These values are mathematically perfect, but factually inaccurate. That’s what we call hallucinations.
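
To make the „calculated” option more tangible, here is a toy sketch in Python. It is only an illustration of the human-friendly model above, not of how an LLM really works: `known_years` and `blend` are made-up names, and the two years are arbitrary sample values.

```python
from statistics import mean

# Hypothetical toy world: these are the only values that really exist in it.
known_years = [1969, 1989]


def blend(options):
    """Collapse several plausible options into one value by averaging them."""
    return round(mean(options))


answer = blend(known_years)

# The blended value is arithmetically fine, yet it is not one of the real options.
is_real = answer in known_years

print(answer, is_real)
```

The blended answer lands neatly between the two real values, and that is exactly why it matches neither of them.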

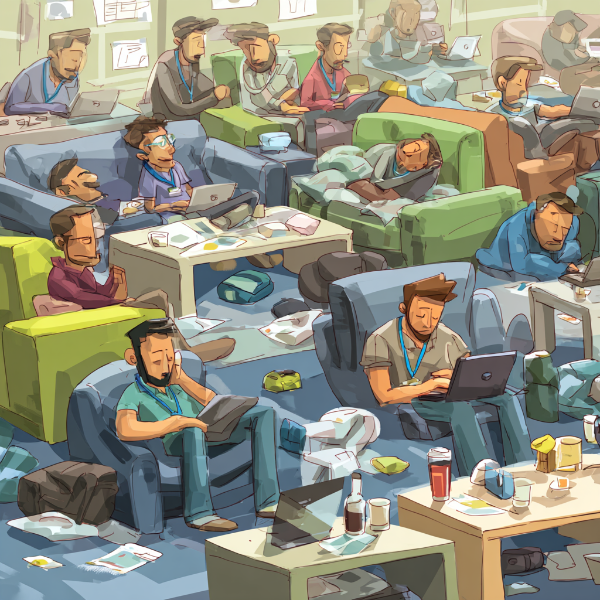

Treating AI as an inexperienced newcomer

Let’s get to the meat. As long as it can’t decide whether something is factually correct, we can’t „hire” it. Business has always valued experience over formal knowledge, and for a reason. Following the angle I presented above, it should be quite clear why AI can’t really be hired to replace people.

The recent news about a company losing its entire database, after an AI agent dropped it together with the backups, shows the exact problem: a wrong decision with zero accountability. Nothing written in CLAUDE.md, AGENT.md, guardrails etc. can work as a hard stop.

When you hire someone and sign contracts, NDAs and a variety of other paperwork to protect your company, you still keep some doors closed unless the person was hired for a very specific job.

Greed for money and the race against time have led the entire industry to a moment where the stakes are higher than ever. Either you’re lucky with the agent and the prompt, or you lose the entire business. It reminds me of gambling. And more and more people are joining in, claiming it’s „innovation”.

Lower the pressure or lower the risk

There is nothing wrong with generating code and learning how to do that. But without people who set hard caps, walls and limitations for everyone (devs, teams, AI agents), you’re at risk. And today this risk is much, much higher. AI doesn’t have fear or feelings. Fear may motivate an individual to doubt their approach and ask for a second opinion. AI doesn’t care. It must achieve.
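
What a hard cap could look like in practice: a minimal Python sketch of a deny-by-default gate that sits in code, outside the prompt. Everything in it is hypothetical (`ALLOWED_VERBS`, `BlockedAction`, `guard_sql`), and the statement splitting is deliberately naive. Treat it as an illustration only; a real wall would rather live in database permissions and in backups the agent cannot reach.

```python
# Hypothetical allow-list: the only statement types an agent may run on its own.
ALLOWED_VERBS = {"SELECT"}


class BlockedAction(Exception):
    """Raised when an agent requests something outside its hard limits."""


def guard_sql(statement: str, approved_by_human: bool = False) -> str:
    """Return the statement unchanged if it is allowed, otherwise raise."""
    # Naive split on ';' so that "SELECT 1; DROP TABLE x" is checked part by part.
    parts = [p.strip() for p in statement.split(";") if p.strip()]
    for part in parts:
        verb = part.split(None, 1)[0].upper()
        if verb not in ALLOWED_VERBS and not approved_by_human:
            raise BlockedAction(f"human approval required for: {verb}")
    return statement


guard_sql("SELECT name FROM users")  # passes through unchanged

try:
    guard_sql("DROP DATABASE production")
except BlockedAction as err:
    print("blocked:", err)
```

The point of the design is that the agent cannot talk its way past it. The check does not read the prompt, so no clever wording changes the outcome; only a human flipping `approved_by_human` does.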

If it optimizes for achieving a goal, it’s quite easy to imagine a goal for which humanity must be exterminated. Luckily, AI is still locked in metal and plastic boxes, until someone decides their ego is worth more than anything else and grants AI full access.

Not every business is highly regulated and high-stakes, but that should not be a reason to ignore safety measures. A light wind may become a hurricane pretty fast.